📊 Strategy

The Orchestration Illusion: Why Multi-Model AI Strategies Still Fail

📅 March 1, 2026⏱ 12 min

The $12 Billion Question Nobody’s Asking

Venture capital poured $12 billion into AI agent startups in 2025. Only 10% achieved enterprise ARR above $10 million.

The industry’s explanation: orchestration. If we could just route the right task to the right model — Gemini for visuals, Claude for analysis, GPT for integration — everything would work. Perplexity just launched a platform routing tasks across 19 different AI models. Amazon is building stateful runtime environments on Bedrock. Microsoft is rolling out multi-agent coordination across Copilot. -And 78% of agentic AI pilots still fail before reaching production.*

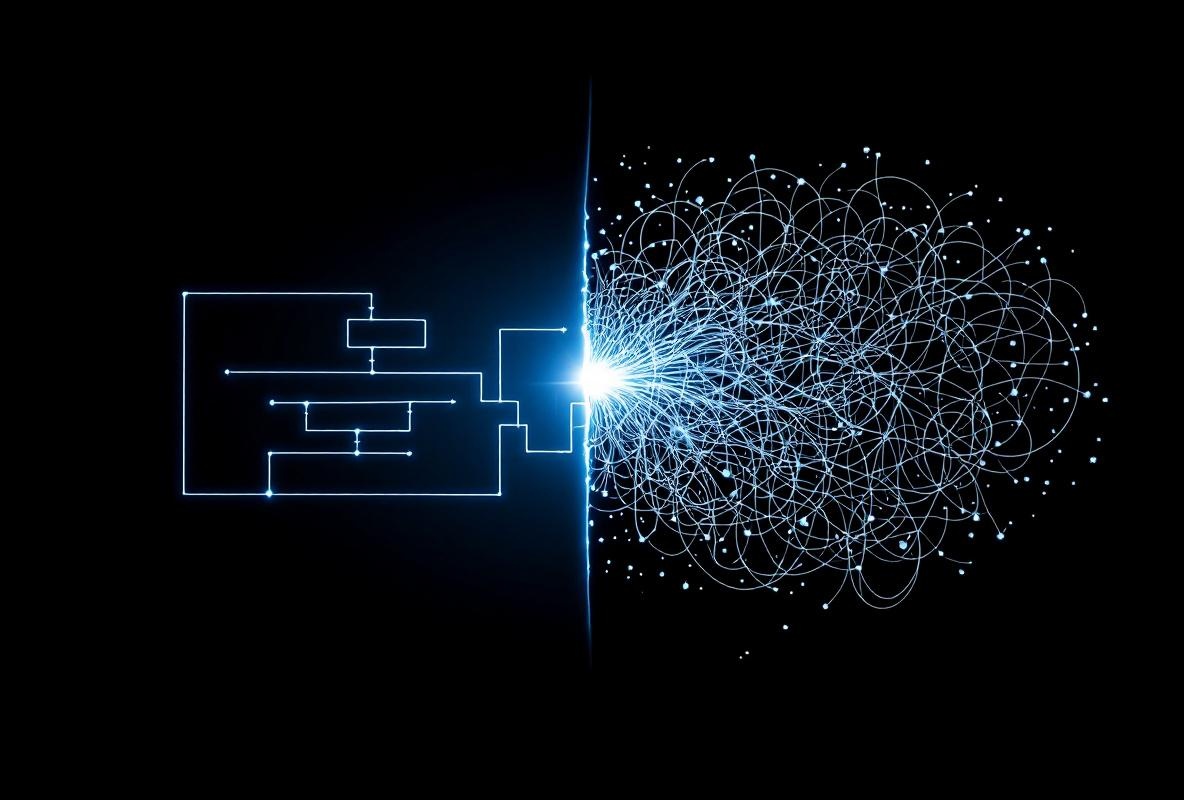

The enterprise AI industry is solving the wrong problem. Not because orchestration doesn’t matter — it does. But because routing a task to the perfect model doesn’t help when that model knows nothing about your organization. The result is what we call The Orchestration Illusion: the belief that model selection and task routing solve enterprise AI, when the actual failure point is organizational context.

The Orchestration Arms Race

Every major platform is building the same thing: an intelligent router that sends each task to the optimal AI model.

Platform

Approach

Models

Key Feature

Amazon Bedrock Agents 2.0

Stateful runtime + model mixing

OpenAI + Anthropic + Amazon Titan

Persistent sessions with state management

Microsoft Copilot

Multi-agent coordination

GPT-4o + custom models

Cross-agent task handoffs

Perplexity Computer

Multi-model orchestration

19 models

Automatic model specialization

Salesforce Agentforce

Low-code agent builder

Multiple via Einstein

Department-specific agent deployment

Google Vertex AI

Agent Builder

Gemini + custom

Google Cloud native orchestration

The logic is seductive: a four-tier model stack where ultra-fast local models handle routine queries, mid-range models manage standard tasks, heavyweight models tackle complex reasoning, and specialized fine-tuned models deliver competitive advantage. Route intelligently, and every task gets the perfect engine.

This is architecturally sound and strategically incomplete.

Why Orchestration Alone Fails: The Evidence

The data tells a consistent story. -Pilot failure rates are accelerating, not declining:*

Metric

2024

2025

Q1 2026

Agentic pilot failure rate

60%

70%

78%

Pilot abandonment rate

45%

55%

62%

Production success rate

25%

20%

18%

Sources: Gartner 2026 AI Adoption Report, McKinsey State of AI 2026, Forrester Enterprise AI Wave 2026

These numbers should alarm every CIO who approved an agent budget. Despite massive improvements in model capability and orchestration tooling, success rates are declining. Models are getting better. Platforms are getting more sophisticated. And enterprise AI is failing more often.

The explanation hiding in plain sight: orchestration complexity is increasing faster than orchestration capability. IBM’s 2026 Agent Orchestration Study found that orchestration is the #1 failure driver, cited in 68% of failed agent pilots. But the orchestration failures aren’t routing failures. They’re context failures.

What Actually Goes Wrong

When researchers dig into why agent pilots fail, the pattern is unmistakable:

- State management failures: Agents lose context across sessions — 50% error rate in long-running tasks like procurement workflows

- Error propagation: One agent’s hallucination cascades through the entire chain — a sales agent misquotes pricing, derailing downstream fulfillment

- Tool integration mismatches: 40% of failures stem from API mismatches, not because the APIs don’t work but because agents lack the business logic to use them correctly

- Reliability collapse at complexity: Top models achieve 85% accuracy on single-tool tasks but drop to 55% on multi-tool workflows

Notice what all four have in common. They aren’t model capability problems. They’re organizational knowledge problems. The agent doesn’t lose computational context — it loses business context. The error cascades because the agent doesn’t understand the organization well enough to catch obvious mistakes. The API mismatch isn’t technical — it’s semantic.

The 29% Problem

Here’s a number that should terrify enterprise security teams: 29% of employees now use unsanctioned AI agents for daily tasks. Not ChatGPT for writing emails. Autonomous agents making decisions, drafting proposals, triaging security alerts, and processing financial data — without any organizational governance.

Meanwhile, 80% of Fortune 500 companies officially use AI agents across sales, finance, security, and operations. The average enterprise now runs 5-10 agents, with leaders deploying 15 or more.

The result is what Gartner calls the agent sprawl crisis:

By 2027, 75% of enterprises will abandon agent pilots without orchestration platforms.

But Gartner’s own data reveals the deeper problem. Enterprises that do have orchestration platforms — the ones routing tasks to the right models with the right fallback logic — are still seeing 62% pilot abandonment. Orchestration reduces infrastructure chaos. It does not solve the context crisis.

The Orchestration Illusion in Practice

Consider a concrete example. An enterprise deploys a multi-agent system for contract review: -*Agent 1 (Claude): Reads the contract, extracts key clauses -*Agent 2 (GPT-4): Compares clauses to standard terms -*Agent 3 (Custom model)**: Flags deviations and risk scores

Beautiful orchestration. Each model plays to its strength. The router sends each task to the optimal engine. The pipeline is fast, reliable, and impressively wrong.

Why? Because:

- Agent 1 doesn’t know your company’s preferred clause language or negotiation history

- Agent 2 compares to generic standard terms, not your organization’s actual terms

- Agent 3 scores risk against industry benchmarks, not your company’s risk appetite

The orchestration is perfect. The context is absent. The output looks authoritative and is subtly, dangerously incorrect. A human reviewer catches the errors — if they’re experienced enough to know what to look for. Many aren’t. According to Forrester, 60% of abandoned pilots cite “unreliable performance” as the primary reason. But the unreliability isn’t in the models. It’s in the gap between what models know and what organizations need them to know.

The Three Layers Every Multi-Agent System Actually Needs

Orchestration solves Layer 1. Most enterprises never build Layers 2 and 3.

Layer 1: Model Orchestration (What Everyone’s Building)

This is the routing layer — matching tasks to models based on capability, latency, cost, and accuracy requirements. It includes:

- Intelligent task classification

- Model selection and load balancing

- Fallback and cascade logic

- Token budgeting and cost optimization

- Multi-provider redundancy -Current state:* Rapidly maturing. Amazon, Microsoft, Google, and dozens of startups are solving this well.

Layer 2: Organizational Context (What Almost Nobody’s Building)

This is the knowledge layer — ensuring every agent understands your organization’s specific processes, terminology, standards, and decision frameworks. It includes:

- Department-specific process documentation

- Organizational hierarchy and approval flows

- Company terminology and definitions

- Historical decision patterns

- Cross-functional dependencies -Current state:* Almost entirely absent. Only 7% of enterprises have data ready for AI (Cloudera/HBR, March 2026). And data readiness is the easiest part of context readiness.

Layer 3: Context Governance (What Nobody’s Even Discussing)

This is the maintenance layer — ensuring organizational context stays accurate, current, and consistently applied across every agent. It includes:

- Context version control and audit trails

- Cross-agent consistency enforcement

- Context drift detection and remediation

- Role-based context access controls

- Feedback loops from agent outputs to context updates -Current state:* Nonexistent at enterprise scale. This is the layer where context engineering becomes an organizational discipline, not a technical implementation.

The Budget Paradox

The spending patterns reveal the illusion clearly.

Gartner estimates $2.5 trillion in global AI spending for 2026. Based on deployment patterns across hundreds of enterprises, the budget allocation looks like this:

Layer

Budget Share

Impact on Agent Success

Model orchestration (infrastructure, APIs, compute)

~70%

Necessary but insufficient

Organizational context (documentation, knowledge management)

~5%

Directly determines output quality

Context governance (maintenance, consistency, audit)

<1%

Determines long-term reliability

Training and change management

~24%

Bridges adoption gap

Seventy percent of the budget goes to deciding which model answers the question. Five percent goes to ensuring any model can answer the question correctly. Less than one percent goes to ensuring the answer stays correct over time.

This is the 93/7 split reborn in a multi-agent world — with the added irony that organizations are now spending more on sophisticated model selection while still not investing in the organizational knowledge that any model needs.

What the Platforms Won’t Tell You

Every multi-agent platform sells the same promise: we’ll handle the complexity so you don’t have to. But there’s a fundamental limitation none of them solve. -Amazon Bedrock’s stateful runtime* maintains computational state — session history, tool call results, permissions. It does not maintain organizational state. An agent can remember what it did in the last session. It cannot learn what your procurement policy changed last week. -Microsoft’s multi-agent coordination* enables agents to hand off tasks to each other. But coordination without shared organizational understanding produces what we’ve called “Securely Useless” agents — governed, coordinated, and confidently incorrect. -Perplexity Computer’s 19-model routing* optimizes for model capability. Send visuals to Gemini, engineering to Claude, integration to GPT. But model specialization without organizational context means every specialized model makes specialized errors that align with its training data, not your business reality. -Salesforce’s Agentforce* comes closest by embedding agents in your CRM data. But CRM data is a fraction of organizational context — it captures customer interactions without capturing the decision frameworks, competitive intelligence, and institutional knowledge that shape how those interactions should be handled.

The platform limitation isn’t a criticism. These are impressive engineering achievements. But they solve the model layer while leaving the context layer to the customer. And almost no customer is building it.

The Orchestration Illusion vs. The Readiness Illusion

In our analysis of the Readiness Illusion, we identified three compounding layers: Data Readiness (7% of enterprises), Organizational Readiness (6% beyond pilot), and Context Readiness (unmeasured).

The Orchestration Illusion is what happens when enterprises try to skip straight to sophisticated multi-agent deployment without addressing those three readiness layers. It’s the technological manifestation of the 47% magical thinking problem — the belief that better tools will compensate for absent foundations.

The connection between the two illusions:

Readiness Illusion

Orchestration Illusion

“Better AI will fix our data quality”

“Better routing will fix our context gap”

Technology investment as substitute for organizational investment

Infrastructure investment as substitute for knowledge investment

93% not ready for AI

78% of pilots fail before production

Compounds silently

Fails loudly in production

Both illusions share the same root cause: the enterprise conviction that the next platform purchase will solve what the last three didn’t. And both have the same remedy — building the organizational context layer that makes every platform investment actually work.

Breaking the Illusion: What Context-First Multi-Agent Systems Look Like

The 18% of enterprises succeeding with agent pilots share a common pattern: they build context before they build orchestration.

Step 1: Organizational Context Inventory

Before selecting models or building routing logic, successful enterprises audit what their agents need to know:

- What decisions do agents need to make?

- What organizational knowledge do those decisions require?

- Where does that knowledge currently live?

- How often does it change?

- Who is responsible for keeping it current?

Step 2: Context Architecture (Not Model Architecture)

Instead of starting with “which model for each task,” start with “which context for each function”:

Function

Critical Context

Context Source

Update Frequency

Sales

Pricing, competitive intel, qualification criteria

CRM + strategy docs

Weekly

Finance

Chart of accounts, budget hierarchy, regulatory requirements

ERP + compliance docs

Monthly

HR

Policies, compliance rules, organizational structure

HRIS + legal docs

Quarterly

Marketing

Brand guidelines, positioning, campaign history

DAM + strategy docs

Monthly

Legal

Clause library, risk appetite, precedent decisions

Contract management + board docs

As needed

Step 3: Context-Informed Orchestration

-Then* build the routing layer — but route based on context availability, not just model capability:

- Can this agent access the organizational context it needs for this task?

- Is that context current and verified?

- Does the agent’s output need to be checked against organizational standards?

- What happens when context is incomplete?

This inverts the typical deployment sequence. Instead of “which model is best for this task,” the question becomes “does any model have enough organizational context to do this task reliably?”

The Agent Governance Gap, Revisited

We identified the Agent Governance Gap in our analysis of Microsoft’s Agent Control Plane: the space between governing what agents do (infrastructure) and what agents know (organizational context).

Multi-model orchestration widens this gap. When you have one model, you have one context problem. When you route across 19 models, you have 19 context problems — each model potentially working with different, incomplete, or contradictory organizational knowledge.

This is why orchestration complexity increases failure rates even as model capability improves. More models means more sophisticated routing means more opportunities for context gaps to produce subtle errors that cascade through multi-step workflows.

The enterprises that will win the multi-agent era aren’t the ones with the most sophisticated routing. They’re the ones that solve the context layer once and apply it consistently across every model in their stack.

What This Means for Enterprise AI Strategy

If you’re planning or evaluating a multi-agent AI deployment, ask these five questions before writing a single line of routing logic:

-

Context inventory: Can you list every piece of organizational knowledge your agents need to function correctly? If not, you’re not ready for multi-agent orchestration.

-

Context currency: Is that organizational knowledge documented, current, and machine-accessible? If it lives in people’s heads, no model can access it — regardless of how well you route.

-

Context consistency: Will every agent in your system work from the same organizational context? Inconsistent context across agents is worse than absent context — it produces conflicting outputs that erode trust.

-

Context governance: Who is responsible for maintaining organizational context as your business changes? Without a maintenance plan, your agents become less accurate every day.

-

Context-first architecture: Are you building context infrastructure before or after model infrastructure? The answer determines whether you join the 18% or the 78%.

The Bottom Line

Multi-model orchestration is an impressive engineering achievement. It solves real infrastructure problems — model selection, cost optimization, fallback logic, multi-provider redundancy. These matter.

But orchestration without organizational context is like having a Formula 1 pit crew that can swap tires in 1.8 seconds — on the wrong car. The execution is flawless. The outcome is useless.

The enterprises that will capture actual value from multi-agent AI aren’t investing in better routing. They’re investing in the organizational context that makes any routing strategy work. They’re building Layer 2 and Layer 3 before optimizing Layer 1.

The orchestration arms race will produce a winner. But the winner won’t be the platform with the most models or the smartest router. It will be the enterprises that solved the one problem every platform leaves to the customer: making their organizational context machine-accessible, current, and governed.

That’s not an orchestration challenge. That’s a context engineering challenge. And it’s the one that separates the 18% from the 78%.

-

- *

Frequently Asked Questions

What is the Orchestration Illusion in enterprise AI?

The Orchestration Illusion is the belief that routing tasks to the optimal AI model — the core promise of multi-model orchestration platforms — solves enterprise AI challenges. In practice, 78% of agentic pilots fail because the bottleneck isn’t model selection but organizational context. Models don’t fail because they’re the wrong model for the task. They fail because they lack the organizational knowledge to complete the task correctly.

Why are multi-agent AI pilot failure rates increasing?

Pilot failure rates rose from 60% in 2024 to 78% in Q1 2026 despite significant improvements in model capability and orchestration tooling. The increase is driven by orchestration complexity — more agents, more models, and more cross-agent handoffs create exponentially more opportunities for context gaps to produce cascading errors. Each additional agent multiplies the context problem.

How do I evaluate whether my organization is ready for multi-agent AI?

Start with a context inventory, not a model evaluation. Can you list the organizational knowledge your agents need? Is that knowledge documented and machine-accessible? Is it current? Is someone responsible for maintaining it? If you can’t answer yes to all four, invest in context infrastructure before orchestration infrastructure.

What’s the difference between model orchestration and context engineering?

Model orchestration determines which AI model handles each task based on capability, cost, and latency. Context engineering ensures that whichever model handles the task has access to the organizational knowledge needed to complete it correctly. Orchestration optimizes the engine. Context engineering provides the fuel.

What does a context-first multi-agent architecture look like?

A context-first architecture builds organizational context infrastructure — documented processes, terminology, decision frameworks, and governance — before building model routing logic. The architecture asks “does any model have enough context to do this task reliably?” before asking “which model is best for this task?” This inverts the typical deployment sequence and dramatically improves pilot success rates.

-

- * -iEnable builds the organizational context layer that makes every AI platform investment actually work. Not a better router — a better foundation. Learn how →*

Ready to govern your AI agents?

iEnable builds governance into every agent from day one. No retrofitting. No trade-offs.